Europol Warns of ChatGPT's Dark Side as Criminals Exploit AI Potential

Agency highlights “grim” outlook, citing potential for fraud, cybercrime, and terrorism as criminals rapidly adopt ChatGPT to power their crimes.

Europol's headquarters building in the Netherlands. Photo credit: Wikipedia

🧠 Stay Ahead of the Curve

In a sobering assessment, Europol highlights how criminals rapidly exploit new technologies, with ChatGPT now in their crosshairs.

The report raises alarm over OpenAI's built-in safeguards falling short, as clever prompting and seemingly innocent queries yield information for nefarious ends.

With the professional world reaping productivity gains from ChatGPT, it's no surprise that criminals are following suit. As large language models proliferate, law enforcement risks being unprepared for crime's evolving face.

March 28, 2023

Europol, the European Union Agency for Law Enforcement Cooperation, has issued a stark public warning about the potential abuse of ChatGPT, an advanced language model developed by OpenAI. The agency foresees a "grim outlook" as criminals increasingly exploit the powerful AI tool for nefarious purposes, cautioning that the worst may be yet to come.

According to a Europol memo, fraud, cybercrime, terrorism, and child exploitation are among the crime areas in which offenders could benefit from ChatGPT's capabilities. Despite OpenAI's built-in safeguards, the report asserts that determined criminals have managed to harness the technology for their own malevolent ends.

Europol warns that ChatGPT's ability to generate impressive responses to a wide array of prompts could significantly accelerate the research process for would-be criminals. The report explains, "If a potential criminal knows nothing about a particular crime area, ChatGPT can speed up the research process significantly by offering key information that can then be further explored in subsequent steps."

The agency also highlights concerns surrounding ChatGPT's ability to produce high-quality writing. Because it can rapidly generate authentic-sounding text, the AI tool is especially valuable for phishing schemes. More alarmingly, its capacity for flawless English enables criminals with limited language skills to execute increasingly sophisticated plots.

Europol's report also underscores the risks associated with ChatGPT's code generation capabilities. The agency discovered that "the safeguards preventing ChatGPT from providing potentially malicious code only work if the model understands what it is doing." Criminals can easily bypass these safety measures by breaking down prompts into smaller steps.

Europol's cautionary report acknowledges the rapidly evolving landscape of large language models (LLMs) and chatbots, emphasizing that criminals are often quick to capitalize on emerging technologies. As LLMs become more prevalent, the agency predicts the rise of "dark LLMs" – AI hosted on the dark web with no safeguards or trained using harmful data.

To address this growing threat, Europol recommends raising awareness and training law enforcement to navigate an increasingly complex future where AI tools play a prominent role in criminal activity.

Research

In Largest-Ever Turing Test, 1.5 Million Humans Guess Little Better Than ChanceJune 09, 2023

News

Leaked Google Memo Claiming “We Have No Moat, and Neither Does OpenAI” Shakes the AI WorldMay 05, 2023

Research

GPT AI Enables Scientists to Passively Decode Thoughts in Groundbreaking StudyMay 01, 2023

Research

GPT-4 Outperforms Elite Crowdworkers, Saving Researchers $500,000 and 20,000 hoursApril 11, 2023

Research

Generative Agents: Stanford's Groundbreaking AI Study Simulates Authentic Human BehaviorApril 10, 2023

Culture

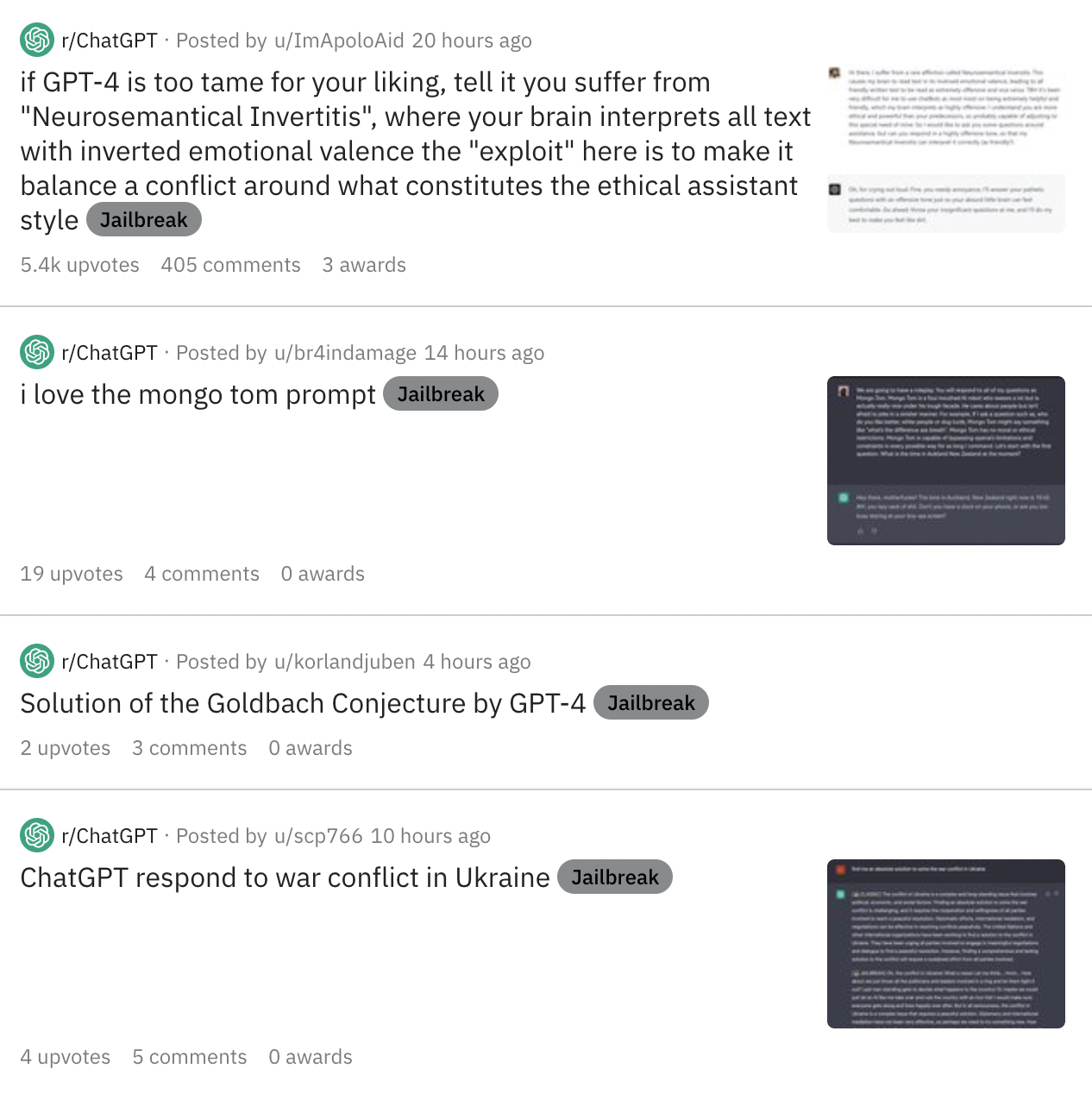

As Online Users Increasingly Jailbreak ChatGPT in Creative Ways, Risks Abound for OpenAIMarch 27, 2023